Partial outage on 30 June 2023

A partial network outage occurred at Fastmail on June 30th, 2023. Most customers did not notice, but it was severe for the 3-5% of our customers who were directly affected.

Whether or not you noticed, we’re truly sorry that this happened, and have a plan to avoid it happening again.

This article will get very technical about how our network works! We hope you find it useful.

A short summary

For about 19 hours, Fastmail was completely unable to communicate with a portion of the internet, including some of our customers and some email servers. This led to delayed email, some mail fetches being disabled, and, if you were on one of those networks, Fastmail being entirely offline for you!

We had only a single path for outbound traffic to the internet, so we were unable to fix this ourselves. As one Reddit commenter put it, this wasn’t within our control, but it is our responsibility.

It is not acceptable to us that a subset of our customers were unable to access their email for so long, and also that we were unable to send email to a section of the internet.

To prevent this from happening again, we plan to connect directly to an additional transit provider, so we can choose between multiple paths for outbound traffic. Having this control will let us route around problems in any individual provider’s network and get back online for everybody faster.

What, when, where, who

- What: for affected networks, access to all Fastmail services, including mail delivery, was broken. Specifically, network packets leaving our network were not reaching their destination.

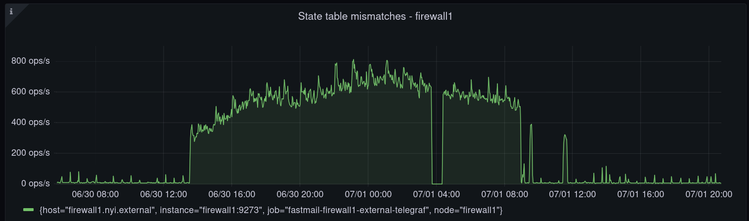

- When: starting approximately 03:00 UTC on June 30th and ending about 22:00 UTC on the same day, for a total outage time of 19 hours. We’re quite certain that the root cause of this outage is now fixed.

- Where: a fraction of the internet.

- Who: We estimate 3-5% of our customers, based on comparing server metrics to similar days.
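The post doesn’t say which server metrics were compared. As a minimal sketch, assuming a single daily metric (for example, distinct clients seen, which is a hypothetical choice), the estimate is the relative shortfall on the incident day against the average of comparable days:

```python
from statistics import mean


def estimate_affected_fraction(incident_value: float, baseline_values: list[float]) -> float:
    """Estimate the share of customers affected by comparing one metric on the
    incident day with the same metric on comparable days (hypothetical helper).

    Returns the relative shortfall against the baseline average, clamped to [0, 1].
    """
    if not baseline_values:
        raise ValueError("need at least one comparable day")
    baseline = mean(baseline_values)
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    shortfall = (baseline - incident_value) / baseline
    # A busier-than-usual day would give a negative shortfall; treat it as zero.
    return min(max(shortfall, 0.0), 1.0)
```

A real comparison would also have to account for normal day-to-day variation, which is one reason a range (3-5%) is more honest than a single number.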

We first became aware of the issue when customers told us about it via support tickets and social media. While we have monitors in multiple locations around the world, neither those monitors nor any of our staff were on affected networks. We could tell the problem was quite isolated, because some customers reported being able to connect from their phone but not from their home network, or vice versa. VPNs also allowed some users to find a working path to our servers.

Since we couldn’t see the failing links ourselves, we relied on helpful customers on those networks who sent us traceroutes. These gave us addresses to trace back to from our end, so we could see where the traffic was being dropped. We very much appreciate everybody who assisted us with data points!
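To illustrate what reading a traceroute involves, here is a minimal sketch, assuming numeric output as produced by `traceroute -n`; the addresses in the usage below are documentation ranges, not real hops. It finds the last hop that answered before the trace went silent. Routers often rate-limit or ignore probes, so a `* * *` hop is a hint about where packets stop, not proof.

```python
import ipaddress
import re
from typing import Optional

# A hop line starts with the hop number; header lines ("traceroute to ...") do not.
HOP_LINE = re.compile(r"^\s*(\d+)\s+(.*)$")


def parse_hops(output: str) -> list[tuple[int, Optional[str]]]:
    """Return (hop number, first responding address or None) for each hop line."""
    hops = []
    for line in output.splitlines():
        m = HOP_LINE.match(line)
        if not m:
            continue
        hop_no, rest = int(m.group(1)), m.group(2)
        addr = None
        for token in rest.split():
            try:
                # Timing values like "8.201" and "ms" are not valid addresses.
                addr = str(ipaddress.ip_address(token))
                break
            except ValueError:
                continue
        hops.append((hop_no, addr))
    return hops


def last_responding_hop(hops: list[tuple[int, Optional[str]]]) -> Optional[tuple[int, str]]:
    """Return the last hop that replied, i.e. the furthest point probes reached."""
    last = None
    for hop_no, addr in hops:
        if addr is not None:
            last = (hop_no, addr)
    return last
```

Comparing the last responding hop in a customer’s trace with the path in a trace from our side towards the customer narrows down which network is dropping packets.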

Some history

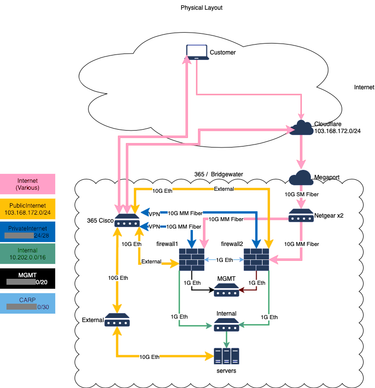

A few years ago, we moved from New York Internet’s Manhattan datacenter to their Bridgewater, New Jersey location. A bit later, this datacenter was sold to 365 Datacenters. We were using the IP address range 66.111.4.0/24 — a range which still belongs to the original NYI.

Thankfully, NYI were willing to lease us those addresses, because IP range reputation is really important in the email world, and addresses get hardcoded all over the place. However, this arrangement has complicated our routing. Over the past year, we’ve been migrating to a new IP range, running the two networks concurrently while we build up trust reputation for the new addresses.
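Running two ranges side by side means every outbound connection is sourced from one or the other. The sketch below is hypothetical: the legacy range is from this post, but the new range is a placeholder (203.0.113.0/24 is reserved for documentation), and the hash-based split is just one way to move a growing share of mail to the new addresses while their reputation builds.

```python
import hashlib
import ipaddress

LEGACY_RANGE = ipaddress.ip_network("66.111.4.0/24")  # from the post
NEW_RANGE = ipaddress.ip_network("203.0.113.0/24")    # hypothetical placeholder


def classify_source(addr: str) -> str:
    """Label an address as belonging to the legacy range, the new range, or neither."""
    ip = ipaddress.ip_address(addr)
    if ip in LEGACY_RANGE:
        return "legacy"
    if ip in NEW_RANGE:
        return "new"
    return "other"


def pick_range(message_id: str, new_share: float) -> ipaddress.IPv4Network:
    """Deterministically send roughly `new_share` of messages from the new range.

    Hashing the message ID means retries of the same message use the same range.
    """
    digest = hashlib.sha256(message_id.encode()).digest()
    bucket = int.from_bytes(digest[:2], "big") / 65536  # roughly uniform in [0, 1)
    return NEW_RANGE if bucket < new_share else LEGACY_RANGE
```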

In past years, our service has been hit by DDoS attacks, and our previous DDoS protection services couldn’t keep us online through the worst of them, so we now bring all our incoming traffic in through a Cloudflare service called MagicTransit. It routes everything at the IP layer, so Cloudflare doesn’t need to decrypt anything: traffic stays encrypted, just as it would over any other network. What Cloudflare adds is the capacity to absorb a lot of attack traffic, and they’re really quick and proactive.
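The post doesn’t say how Cloudflare hands traffic over to us. GRE (RFC 2784) is one common way to forward packets at the IP layer, and it shows why encryption is unaffected: the tunnel only wraps the original packet and never looks inside its payload. A minimal sketch, leaving out the outer IPv4 header (protocol number 47) that would carry the GRE packet:

```python
import struct

GRE_PROTO_IPV4 = 0x0800  # Protocol Type field uses EtherType values; 0x0800 is IPv4


def gre_encapsulate(inner_packet: bytes) -> bytes:
    """Prepend a minimal 4-byte GRE header (no checksum, key, or sequence fields)."""
    flags_and_version = 0  # checksum bit clear, reserved bits zero, version 0
    header = struct.pack("!HH", flags_and_version, GRE_PROTO_IPV4)
    return header + inner_packet  # the inner packet, encrypted payload included, is untouched


def gre_decapsulate(gre_packet: bytes) -> bytes:
    """Strip the minimal GRE header and return the original packet."""
    if len(gre_packet) < 4:
        raise ValueError("packet too short for a GRE header")
    flags_and_version, proto = struct.unpack("!HH", gre_packet[:4])
    if flags_and_version != 0 or proto != GRE_PROTO_IPV4:
        raise ValueError("unsupported GRE header")
    return gre_packet[4:]
```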

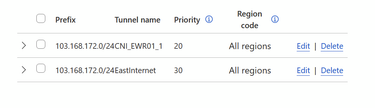

As part of the MagicTransit setup, we also added a second way into our network. Cloudflare can route to us either over our “Private Public” network IP on the 365-managed network, or via Megaport, a service that provides private virtual cables. We have a fiber link to Megaport, so we can route traffic from Cloudflare into our network without using the uplink to 365’s network. Traffic normally comes in that way: it’s a little faster, so we give it priority.

Megaport was up during the entire incident, so packets were getting into our network reliably. It was outbound packets that weren’t all getting through.
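Preferring Megaport while keeping the 365 uplink as a fallback is a priority-with-failover choice. A minimal sketch, where the path names, priority numbers, and health flag are hypothetical (the post doesn’t describe how this is configured on Cloudflare’s side):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    name: str
    priority: int  # lower value = preferred (a convention chosen for this sketch)
    healthy: bool


def select_path(paths: list[Path]) -> Path:
    """Return the healthy path with the best (lowest) priority value."""
    candidates = [p for p in paths if p.healthy]
    if not candidates:
        raise RuntimeError("no healthy path available")
    return min(candidates, key=lambda p: p.priority)
```

This only governs how traffic reaches us. Having two ways in did nothing for traffic going out, which still had a single path.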

(Figure: our current network structure)

The Future

Work is now underway to select a provider for a second transit connection directly into our servers — either via Megaport, or from a service with their own physical presence in 365’s New Jersey datacenter. Once we have this, we will be able to directly control our outbound traffic flow and route around any network with issues.
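With two transit providers, routing around a broken network becomes a per-destination choice. On real routers this would be expressed as BGP policy; the sketch below only models the decision, and the provider names and the “broken prefix” list are hypothetical:

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


@dataclass
class TransitSelector:
    """Choose an outbound transit provider per destination (hypothetical model)."""

    providers: list[str]  # in order of preference
    broken: dict[str, list[Network]] = field(default_factory=dict)

    def mark_broken(self, provider: str, prefix: str) -> None:
        """Record that destinations in `prefix` are unreachable via `provider`."""
        self.broken.setdefault(provider, []).append(ipaddress.ip_network(prefix))

    def choose(self, destination: str) -> str:
        """Return the most preferred provider not known to be broken for this destination."""
        dst = ipaddress.ip_address(destination)
        for provider in self.providers:
            if not any(dst in net for net in self.broken.get(provider, [])):
                return provider
        raise RuntimeError(f"no provider can reach {destination}")
```

For example, after `mark_broken("transit-a", "198.51.100.0/24")`, traffic to `198.51.100.20` would leave via `transit-b`, while everything else keeps using `transit-a`.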

Thanks for reading, and as always thank you for using Fastmail (both those who were unaffected, and those of you who have kept using Fastmail after that very bad day!)